Banks and financial institutions are building data lakes in the cloud for scalability, flexibility, and ease of use, and over time are migrating data there from on-premises storage platforms, mainly Hadoop.

However, an organization with a proactive compliance posture would not move these data lakes to the cloud without an added layer of encryption for enhanced security.

That said, traditional solutions such as Transparent Data Encryption (TDE) or Application Layer Encryption (ALE) are not enough to meet the requirements of complex cloud environments, where data protection depends on several interdependent factors.

Let us walk through a few data fundamentals and understand how banks can solve the encryption challenge.

What is a data lake?

A data lake stores, processes, and secures large amounts of data, including real-time data, in its original, raw form. Data lakes and data warehouses are often mentioned in the same context, but a data warehouse stores structured, filtered data that has already been processed and categorized for specific purposes.

In simple terms, a data lake holds data in all formats, such as audio, video, Excel files, PDFs, and images, and users can pull any type of data from this vast pool at once. A data warehouse, by contrast, neatly groups data by type, and users can access only the subset of data that has been categorized for a specific query.

Why do banks use data lakes?

To stay competitive, banks power their business intelligence by mining and analyzing data for strategy and innovation. They can keep their products relevant only by processing real-time data: continuously recording market trends and customer behavior while accounting for compliance requirements, so that their services deliver a good customer experience.

For example, a bank can display exclusive card offers to customers browsing credit cards on its website, or instantly analyze customer profiles and approve or reject loan applications based on banking history.

A data lake is a centralized reservoir of live information that is timely, raw, and unfiltered, and every department in the organization can access it. Because it is built on low-cost storage, it can scale to very large volumes cost-effectively.

In a data warehouse, by contrast, data is filtered and structured into catalogs that different departments access in silos. Structuring the data and maintaining multiple security policies add cost. Warehouses are well suited to operational work on account holders and can be synced with a customer relationship management (CRM) tool.

A data lake, on the other hand, can be paired with a customer data platform (CDP) for predictive modeling, deep analysis, and long-term business strategy.

Why are banks moving data lakes to the cloud?

Among other reasons, a data ecosystem built in the cloud is easier to operate and does not require recruiting as many highly skilled in-house professionals for its security and management. For example, to run a Hadoop platform on-premises, a bank must employ data engineers to support its data scientists. This staffing requirement creates heavy dependencies and high costs.

Solutions from cloud providers such as Google, Microsoft, Amazon, and Oracle provide agile environments that scale on demand and let banks store, secure, process, and retrieve data more efficiently and cost-effectively across different regions.

What are the data security requirements?

- Compliance: ISO/IEC 27001, GLBA, GDPR, and PCI DSS require banks to secure data at every level, and encryption is the most effective way to do so. These regulations single out customer information such as personally identifiable information (PII), payment history, credit scores, deposits, and account numbers as critical. As a result, banks must strictly encrypt data at rest, in transit, and in use to minimize the risk of exposure and, with it, the risk of data breaches.

- Encryption in Transit: Protects data as it moves from on-prem to the cloud or between two services. The endpoints are authenticated, the data is encrypted before transmission, and on arrival it is decrypted and checked for integrity. TLS, the successor to the now-deprecated SSL protocol, is the standard used to protect data in transit.

- Control of Keys: Public cloud platforms let organizations encrypt big data in several ways. Server-Side Encryption (SSE), for example, is the most convenient option, but it means the cloud provider holds or handles the encryption keys. As a result, even with the cloud vendor's strictest policies, the risk of unauthorized access cannot be ruled out unless the encryption keys are entirely under the bank's control.
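For encryption in transit, the client side of a TLS connection should authenticate the server's certificate and refuse legacy protocol versions. The following is a minimal sketch using Python's standard `ssl` module; the function names and the TLS 1.2 floor are our own illustrative choices, not settings prescribed by any regulation or cloud provider.

```python
import socket
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Build a TLS client context for sending data to a cloud endpoint (illustrative)."""
    # create_default_context() loads the system CA certificates, turns on
    # hostname checking, and requires a valid server certificate.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Assumed policy: reject SSL and TLS 1.0/1.1, which are deprecated.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def open_tls_connection(host: str, port: int = 443) -> ssl.SSLSocket:
    """Hypothetical helper: connect to `host` and complete the TLS handshake."""
    context = make_client_tls_context()
    raw_sock = socket.create_connection((host, port), timeout=10)
    try:
        # server_hostname enables SNI and is checked against the certificate.
        return context.wrap_socket(raw_sock, server_hostname=host)
    except Exception:
        raw_sock.close()
        raise


if __name__ == "__main__":
    ctx = make_client_tls_context()
    print(ctx.check_hostname, ctx.verify_mode == ssl.CERT_REQUIRED, ctx.minimum_version.name)
    # True True TLSv1_2
```

`open_tls_connection` is not called in the demo because it needs network access; the context it uses is what enforces endpoint authentication and encryption.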

How Fortanix Helped a Global Investment Bank

Overview

A lead bookrunner with a reputable founder base, this global investment bank wanted to move its data lake backups (built on the Hadoop platform) from on-prem hosting to AWS S3 (Amazon Simple Storage Service). The goal was cost savings, covering both the backup media and the cost of managing the backups. AWS S3 is a highly scalable object storage service for structured and unstructured data and a common choice for backing up a data lake.

A further benefit of AWS S3 is that copies of all uploaded objects can be stored across multiple systems. As a result, the bank can access the data whenever needed from various locations and protect it against failures, errors, and threats.

Requirement

The challenge was that the bank's compliance team insisted on an additional layer of encryption and would not allow the backups to be moved from on-prem to AWS S3 without it.

In addition, the root encryption keys had to remain entirely outside the public cloud.

The Solution

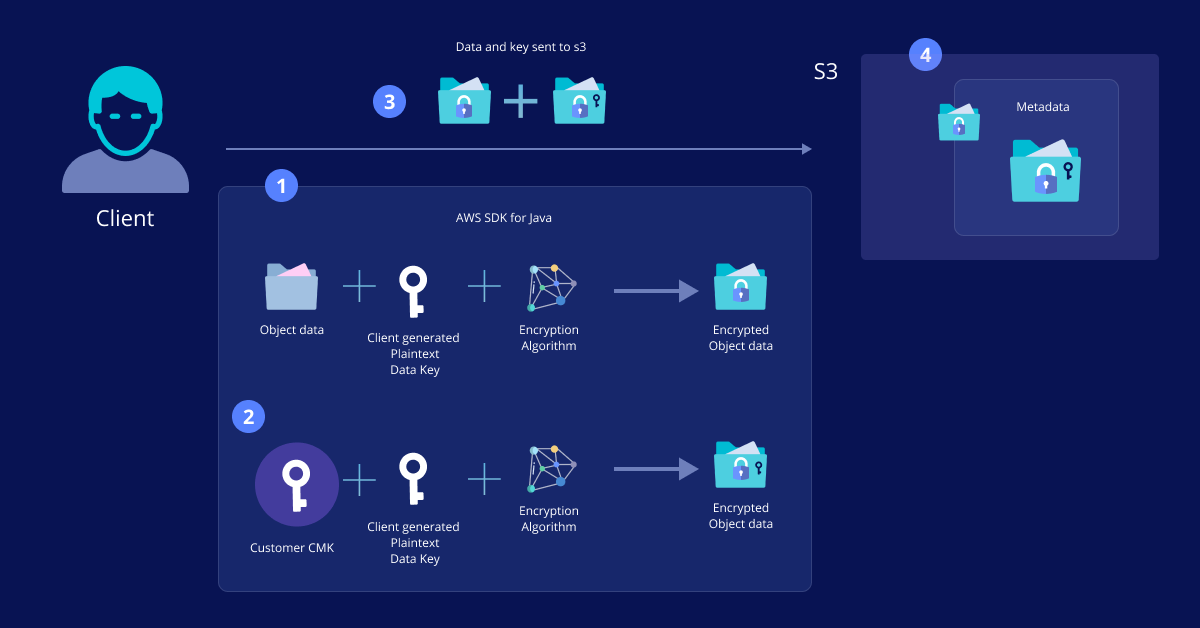

We proposed Fortanix Data Security Manager (DSM) as a solution based on client-side encryption (CSE). Broadly defined, CSE encrypts data on the client before it is transmitted to a server, so the server only ever receives and stores ciphertext.

AWS S3 offered the following ways to secure the bank's data:

- SSE-S3 - Server-Side Encryption with S3 Managed Keys

- SSE-KMS - Server-Side Encryption with KMS Managed Keys

- SSE-C - Server-Side Encryption with Customer Provided Keys

- CSE-KMS - Client-Side Encryption with KMS Managed Keys

- CSE-C - Client-Side Encryption with Customer Provided Keys

In every option except CSE-C, AWS has access to the encryption keys at some point: it either manages the keys itself (SSE-S3, SSE-KMS, CSE-KMS) or receives the customer's key with each request (SSE-C). Only CSE-C keeps ownership of the keys entirely with the S3 user.
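The difference between SSE-C and CSE-C is visible at the HTTP level. With SSE-C, the customer-provided key travels to S3 in request headers on every upload and download (S3 rejects SSE-C requests made over plain HTTP), so AWS handles the key even though it does not store it. The header names below are the ones documented for S3 SSE-C; the `sse_c_headers` helper itself is a hypothetical illustration, not part of any AWS SDK.

```python
import base64
import hashlib
import os
from typing import Dict


def sse_c_headers(key: bytes) -> Dict[str, str]:
    """Build the S3 SSE-C request headers for a customer-provided AES-256 key.

    Illustrative helper, not part of any AWS SDK. The raw key is placed in
    the request itself, so S3 receives it with every call.
    """
    if len(key) != 32:
        raise ValueError("SSE-C expects a 256-bit (32-byte) key")
    return {
        "x-amz-server-side-encryption-customer-algorithm": "AES256",
        # Base64 of the key itself: whoever terminates the request can read it.
        "x-amz-server-side-encryption-customer-key": base64.b64encode(key).decode("ascii"),
        # Base64 of the key's MD5 digest, which S3 uses as an integrity check.
        "x-amz-server-side-encryption-customer-key-MD5": base64.b64encode(
            hashlib.md5(key).digest()
        ).decode("ascii"),
    }


if __name__ == "__main__":
    customer_key = os.urandom(32)
    headers = sse_c_headers(customer_key)
    # Decoding the header recovers the exact key, which is why SSE-C does not
    # keep the key away from AWS the way client-side encryption does.
    recovered = base64.b64decode(headers["x-amz-server-side-encryption-customer-key"])
    print(recovered == customer_key)  # True
```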

With Fortanix DSM, it was easy to configure CSE-C for AWS and Hadoop, and the data was successfully encrypted en route to the cloud.
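Client-side encryption is commonly implemented as envelope encryption: each object is encrypted locally with a fresh data key, and that data key is itself encrypted ("wrapped") with a root key that never leaves the key manager. Only the ciphertext and the wrapped key are uploaded. The sketch below shows that general pattern only and is not DSM's implementation; the `OnPremRootKey` class is a hypothetical stand-in for a root key held outside the cloud. Because Python's standard library has no AES, the `_seal` and `_open` helpers substitute an HMAC-SHA256 keystream with an encrypt-then-MAC tag purely to keep the example dependency-free; a real deployment would use a vetted AEAD such as AES-GCM and keep the root key in an HSM.

```python
import hashlib
import hmac
import os
from typing import Tuple

# ILLUSTRATION ONLY: the standard library has no AES, so _seal/_open use an
# HMAC-SHA256 keystream plus an encrypt-then-MAC tag as a stand-in for an
# AEAD such as AES-GCM. Do not use this construction in production.
NONCE_LEN = 16
TAG_LEN = 32


def _subkey(key: bytes, label: bytes) -> bytes:
    # Derive independent encryption and MAC keys from one 256-bit key.
    return hmac.new(key, label, hashlib.sha256).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    # HMAC-SHA256 in counter mode used as a pseudorandom keystream.
    blocks = bytearray()
    counter = 0
    while len(blocks) < length:
        blocks += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(blocks[:length])


def _seal(key: bytes, plaintext: bytes) -> bytes:
    nonce = os.urandom(NONCE_LEN)
    stream = _keystream(_subkey(key, b"enc"), nonce, len(plaintext))
    ciphertext = bytes(p ^ s for p, s in zip(plaintext, stream))
    tag = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def _open(key: bytes, blob: bytes) -> bytes:
    if len(blob) < NONCE_LEN + TAG_LEN:
        raise ValueError("ciphertext too short")
    nonce, ciphertext, tag = blob[:NONCE_LEN], blob[NONCE_LEN:-TAG_LEN], blob[-TAG_LEN:]
    expected = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed: wrong key or tampered data")
    stream = _keystream(_subkey(key, b"enc"), nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


class OnPremRootKey:
    """Hypothetical stand-in for a root key that stays outside the public cloud."""

    def __init__(self) -> None:
        self._key = os.urandom(32)  # in practice, generated and held in an HSM

    def wrap(self, data_key: bytes) -> bytes:
        return _seal(self._key, data_key)

    def unwrap(self, wrapped_key: bytes) -> bytes:
        return _open(self._key, wrapped_key)


def encrypt_for_upload(root: OnPremRootKey, plaintext: bytes) -> Tuple[bytes, bytes]:
    """Encrypt locally; only the returned ciphertext and wrapped key go to S3."""
    data_key = os.urandom(32)  # fresh data key per object
    return _seal(data_key, plaintext), root.wrap(data_key)


def decrypt_after_download(root: OnPremRootKey, ciphertext: bytes, wrapped_key: bytes) -> bytes:
    """Unwrap the data key with the root key, then decrypt locally."""
    return _open(root.unwrap(wrapped_key), ciphertext)


if __name__ == "__main__":
    root = OnPremRootKey()
    blob, wrapped = encrypt_for_upload(root, b"account=12345;balance=100.00")
    print(decrypt_after_download(root, blob, wrapped) == b"account=12345;balance=100.00")  # True
```

The property that matters for the compliance requirement is that `unwrap` runs only where the root key lives: S3 stores the ciphertext and the wrapped key, and neither is useful without that root key.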

Advantages of CSE-C with Fortanix Data Security Manager

- The bank has exclusive control over where the encryption keys reside and what permissions are granted on them, so it can ensure that Amazon employees cannot access the data.

- There is a distinct separation of roles between the bank, the cloud vendor, and Fortanix.

- All usage and management of the root keys, which reside inside a NIST-certified HSM or an HSM service that is ISO/IEC 27001 and SOC 2 compliant, is recorded in a tamper-proof audit trail.

- Non-technical staff, such as analysts and regulators, can review correlated logs to detect high-risk patterns.

- The compliance team can also verify adherence to GDPR and Schrems II requirements.

- Any team that needs to decrypt data must first obtain quorum approval, so no single person can authorize decryption alone.

- Because the architecture is extensible, the bank can secure data sharing across clouds and regions, and centralize and modernize cryptography across its entire infrastructure.

- Fortanix also offers Confidential Artificial Intelligence (CAI), which works with Fortanix DSM to protect data in memory at runtime while machine learning (ML) or artificial intelligence (AI) algorithms process it.
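A tamper-proof audit trail is only useful if it can be verified. One common way to make a log tamper-evident is a hash chain: every entry includes the hash of the entry before it, so altering any earlier record breaks every hash after it. The sketch below is a generic illustration of that idea, not a description of how DSM stores its audit logs; the `AuditLog` class and its fields are hypothetical. On its own, a hash chain only detects edits to existing entries; someone able to rewrite or truncate the whole chain could recompute every hash, so production systems also anchor the latest hash somewhere protected, for example with an HSM signature.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS_HASH = "0" * 64


def _entry_hash(record: Dict[str, str]) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    entries: List[Dict[str, str]] = field(default_factory=list)

    def append(self, actor: str, action: str, key_id: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"actor": actor, "action": action, "key_id": key_id, "prev": prev}
        digest = _entry_hash(record)
        self.entries.append({**record, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; editing, deleting, or reordering earlier entries breaks it."""
        prev = GENESIS_HASH
        for entry in self.entries:
            record = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or _entry_hash(record) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("analyst-1", "decrypt", "root-key-01")
    log.append("admin-2", "rotate", "root-key-01")
    print(log.verify())  # True
    log.entries[0]["actor"] = "someone-else"
    print(log.verify())  # False
```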
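Quorum consent can be pictured as an M-of-N rule: a decryption request proceeds only once a threshold number of distinct, authorized approvers have signed off. The following sketch models that rule in plain Python; `QuorumPolicy`, `DecryptRequest`, and the ban on self-approval are illustrative assumptions, not DSM's actual API or policy model.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Set


@dataclass(frozen=True)
class QuorumPolicy:
    """Hypothetical M-of-N policy: `threshold` of `approvers` must consent."""

    approvers: FrozenSet[str]
    threshold: int

    def __post_init__(self) -> None:
        if not 1 <= self.threshold <= len(self.approvers):
            raise ValueError("threshold must be between 1 and the number of approvers")


@dataclass
class DecryptRequest:
    """A pending request to decrypt data protected by `key_id`."""

    requester: str
    key_id: str
    approvals: Set[str] = field(default_factory=set)

    def approve(self, policy: QuorumPolicy, approver: str) -> None:
        if approver not in policy.approvers:
            raise PermissionError(f"{approver} is not an authorized approver")
        if approver == self.requester:
            raise PermissionError("requesters cannot approve their own request")
        self.approvals.add(approver)  # a set, so repeat approvals count once

    def is_authorized(self, policy: QuorumPolicy) -> bool:
        return len(self.approvals & policy.approvers) >= policy.threshold


if __name__ == "__main__":
    policy = QuorumPolicy(frozenset({"alice", "bob", "carol"}), threshold=2)
    request = DecryptRequest(requester="dave", key_id="root-key-01")
    request.approve(policy, "alice")
    print(request.is_authorized(policy))  # False: 1 of 2 approvals
    request.approve(policy, "bob")
    print(request.is_authorized(policy))  # True: 2 of 2 approvals
```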