Amid the AI whirlwind, the fundamental concerns about data security, privacy, and compliance remain paramount, as they have with every major technological breakthrough over the years. As data security and governance policies get ever tighter, it turns out there is another part of the AI workload that requires just as much protection: the AI model IP.

For every model owner, from industry giants to startups with best-in-class frontier models, AI intellectual property has become the ultimate crown jewel. This matters in areas like music analytics, where audience data, streaming performance, and proprietary insights also need to stay protected while AI systems process them. Protecting this core IP now requires the most rigorous security measures available.

To keep control over data security, privacy, and compliance while also optimizing the performance of their AI workloads, organizations are moving toward AI data centers, known as AI factories.

Those on-prem deployments, within the boundaries of the enterprise, are tightly governed and secured, all within the organization's control. Meanwhile, model owners carefully guard access to their model IP.

The déjà vu here is that sensitive data will remain on premises, which means the models need to come on prem as well. But there is a lot of hesitation. AI factories are supposed to solve the security concerns; what is stopping the show?

The irony is that most AI factories now resemble the traditional castle-and-moat security architecture, concentrating sensitive workloads and data in one place. Talk about a sweet opportunity to steal jewels!

Most AI factories run on traditional infrastructure, which only secures data at rest and in transit. Once the data is in use, it is processed unencrypted and is therefore vulnerable to prying eyes. What else is visible and explorable?

The model weights and biases, the critical AI IP. When not in use, the model will be sitting encrypted at rest and served over TLS, but during inference, the model is live.

What this means is that the weights are sitting unprotected in memory. Without hardware-based isolation, anyone with host-level access can perform a memory dump, effectively walking away with proprietary IP and sensitive data.

These vulnerabilities are exactly what causes CIOs and CISOs to pause large-scale AI deployments and why model owners hesitate to expose their business-critical IP in on-prem environments, even if it means missing out on substantial revenue opportunities.

So the burning question is: Is there a solution to data and AI model exposure that can unleash enterprise AI adoption?

Why yes. The solution is called Fortanix Confidential AI.

At Fortanix, we are committed to solving the hardest problems in data and AI security. We pioneered Confidential Computing a decade ago to protect data and encryption keys in use. We have now extended our expertise directly to AI.

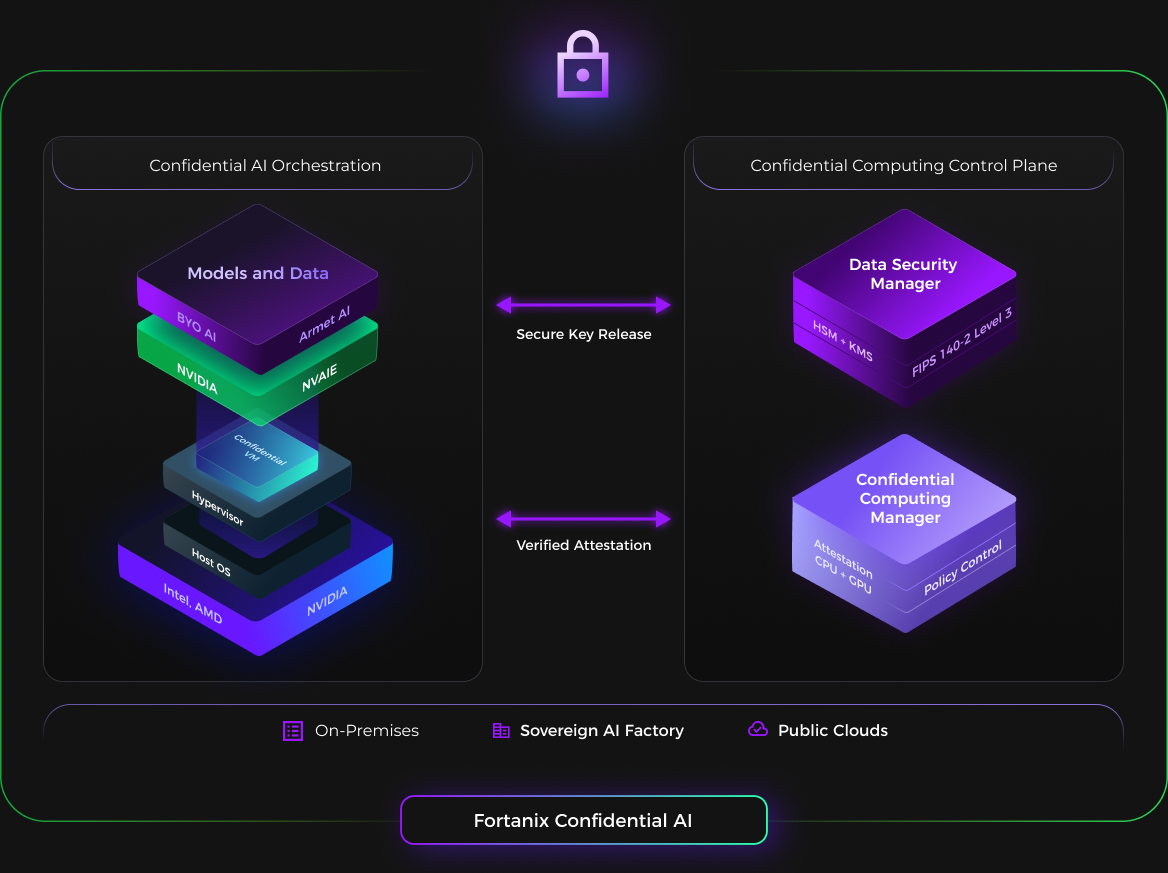

Fortanix Confidential AI builds on Confidential Computing, a hardware-based security technology that protects data, applications, and now AI models in use (during processing or computation) by isolating them in secure, encrypted GPU enclaves, also known as Trusted Execution Environments (TEEs).

This means that sensitive workloads are isolated from the host system, including the operating system and hypervisor. What makes them trusted? Confidential Computing requires attestation: a cryptographic, hardware-backed process that proves that the environment is genuine, secure, and untampered with.

The verification applies to both the hardware and the software. Only after all the checks pass are encryption keys securely released to the TEE, allowing the AI workload to run safely and securely in the isolated environment, shielded even from privileged admins.
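To make the attest-then-release flow concrete, here is a minimal, illustrative Python sketch. It is not the Fortanix API or NVIDIA's attestation protocol. Every name in it (`AttestationReport`, `KeyBroker`, `generate_report`) is hypothetical, and the hardware root of trust is simulated with an HMAC over a shared secret, whereas real TEEs sign reports with an asymmetric key chained to the hardware vendor's certificates. The idea it shows is simply that the key broker hands over the model key only when the report is fresh, authentic, and matches an approved software measurement.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Iterable, Optional

# ASSUMPTION: stand-in for a hardware root of trust. Real TEEs sign reports
# with an asymmetric key chained to the vendor's certificate authority.
HARDWARE_KEY = secrets.token_bytes(32)


@dataclass(frozen=True)
class AttestationReport:
    measurement: str  # SHA-256 hex digest of the software loaded into the TEE
    nonce: bytes      # freshness value chosen by the verifier
    signature: bytes  # produced by the (simulated) hardware


def _sign(measurement: str, nonce: bytes) -> bytes:
    """Simulated hardware signature over the measurement and nonce."""
    message = measurement.encode() + nonce
    return hmac.new(HARDWARE_KEY, message, hashlib.sha256).digest()


def generate_report(software_image: bytes, nonce: bytes) -> AttestationReport:
    """What the TEE would produce: a signed measurement of what it is running."""
    measurement = hashlib.sha256(software_image).hexdigest()
    return AttestationReport(measurement, nonce, _sign(measurement, nonce))


class KeyBroker:
    """Hypothetical key service that releases a key only after attestation."""

    def __init__(self, trusted_measurements: Iterable[str], model_key: bytes):
        self._trusted = set(trusted_measurements)
        self._model_key = model_key
        self._pending = set()

    def challenge(self) -> bytes:
        """Issue a one-time nonce so old reports cannot be replayed."""
        nonce = secrets.token_bytes(16)
        self._pending.add(nonce)
        return nonce

    def release_key(self, report: AttestationReport) -> Optional[bytes]:
        """Return the model key if every check passes, otherwise None."""
        if report.nonce not in self._pending:
            return None  # unknown or already used nonce
        self._pending.discard(report.nonce)
        expected = _sign(report.measurement, report.nonce)
        if not hmac.compare_digest(expected, report.signature):
            return None  # report was not produced by the trusted hardware
        if report.measurement not in self._trusted:
            return None  # software stack is not on the approved list
        return self._model_key


approved_image = b"inference-server-v1"
model_key = secrets.token_bytes(32)
broker = KeyBroker({hashlib.sha256(approved_image).hexdigest()}, model_key)

good_report = generate_report(approved_image, broker.challenge())
print("approved image gets key:", broker.release_key(good_report) == model_key)

tampered_report = generate_report(b"inference-server-v1-patched", broker.challenge())
print("tampered image gets key:", broker.release_key(tampered_report) is not None)
```

In this toy setup the approved image receives the key and the modified image does not. A production flow would also verify the hardware certificate chain and deliver the key over a channel that terminates inside the TEE.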

Fortanix Confidential AI takes Zero Trust to a whole new level and gives enterprises and model owners the needed assurance that memory encryption is active and working.

Built on NVIDIA’s Hopper and Blackwell GPUs, Fortanix Confidential AI addresses the biggest risks and barriers to AI adoption: model theft, data leakage, and compliance with data sovereignty and regulatory requirements.

Model owners can now deploy their IP into enterprise environments without fear of extraction or replication, while enterprises can safely run inference on sensitive or critical data.

With Fortanix Confidential AI, organizations can now run AI in production with trust, security, and sovereignty at the core, knowing that even privileged insiders can't access their most valuable assets: the crown jewels.