For years, data centers haven’t needed much of an explanation. They exist to run applications, store data and keep systems online. As long as uptime was high and performance was predictable, the model worked.

AI has upended that assumption.

As many organizations move beyond small pilots to running AI continuously (training models, refining them, and serving inference at scale), the underlying infrastructure matters in new ways. Compute behaves differently, data moves differently, and new risks emerge.

That shift has given rise to what many are now calling AI factories.

Understanding the differences between AI factories and traditional data centers isn’t just useful background knowledge. It’s a practical decision point for teams trying to figure out whether their existing infrastructure can support the kind of AI systems they want to build.

In this article, we’ll look at:

- What AI factories are designed to do

- How traditional data centers were originally built

- Where the two models diverge in the real world

- How AI factories change security expectations

Let’s dive in.

What Are AI Factories?

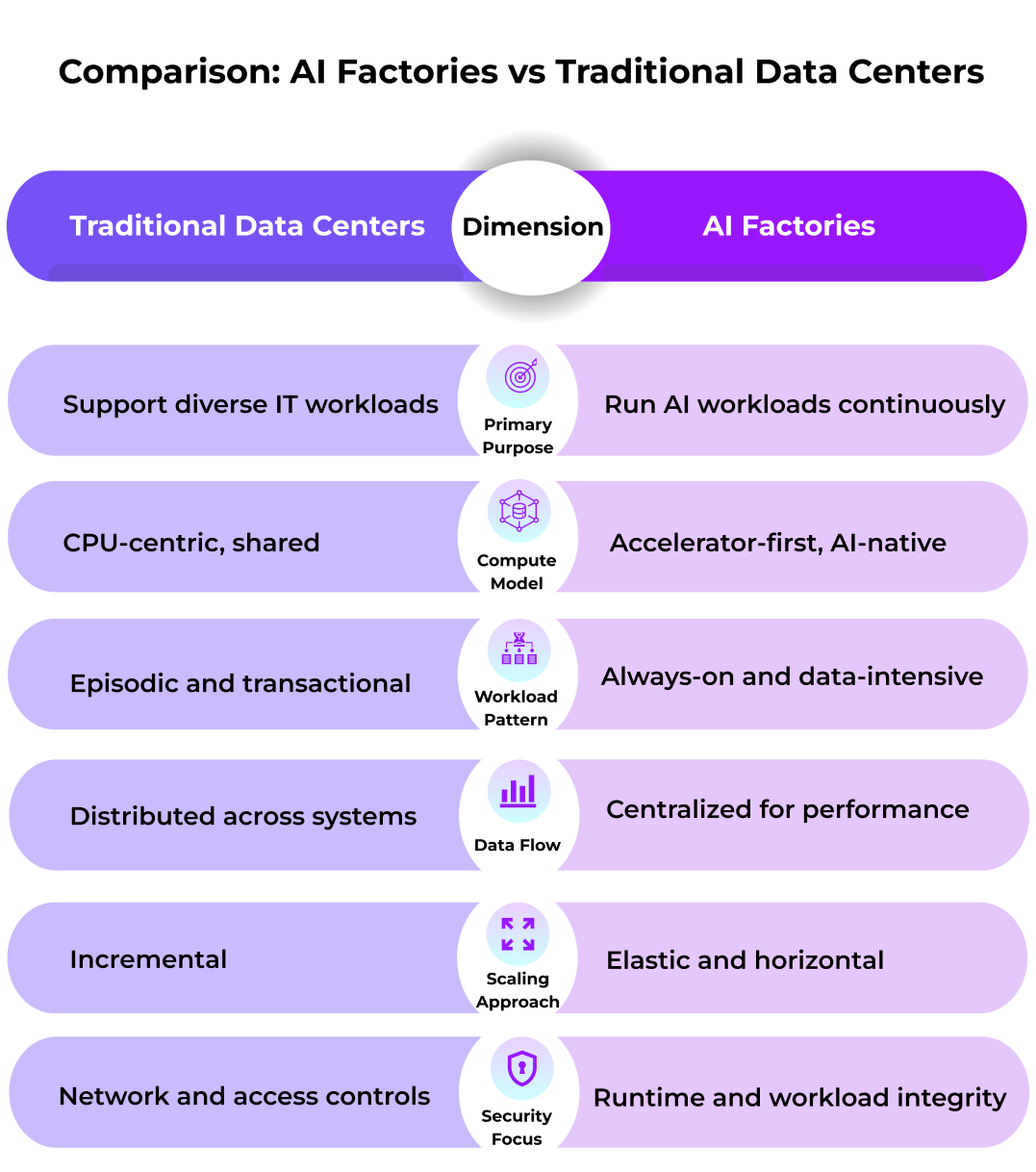

At the most basic level, an AI factory is a purpose-built environment designed to support the AI lifecycle: preparing data, training models, deploying them, and running inference. Unlike traditional infrastructure, where AI is treated as just another application, AI factories make AI the primary workload. Everything else, including compute, networking, orchestration, and monitoring, exists to support that goal.

The term is frequently associated with NVIDIA AI factories, but the concept is broader than any one vendor. It reflects an industry-wide shift toward treating AI as a production system.

This leads to a common question: Why did AI factories emerge? The simple answer is that AI workloads don’t behave like most enterprise applications.

AI workloads rely heavily on parallel processing, repeatedly access large volumes of data, and rarely sit idle. Models are constantly being trained, adjusted, redeployed, and queried. That puts pressure on infrastructure in ways traditional data centers weren't designed to handle.

AI factories were created because forcing these workloads into old-school environments is inefficient and complex, and it creates unpredictable costs. Simply put, purpose-built systems align the infrastructure more closely with how AI actually operates day to day.

How Traditional Data Centers Were Designed

The traditional data centers we know today are a product of how enterprise IT evolved over the years. They were built to support databases, internal systems, and web services, often for multiple teams at once. The goals were stability, predictability, and efficient resource sharing.

In most traditional data centers, CPUs are the default compute layer, and accelerators are optional. Storage and compute are loosely coupled, and workloads are typically scheduled rather than continuous. In addition, security models assume that data is mostly static or transactional.

The traditional data center isn’t dead; the design still works well for many use cases. But it wasn’t built with modern AI as the primary use case.

AI Factories vs Traditional Data Centers: Where the Differences Show Up

The real differences between traditional data centers and AI factories become obvious when you look at how the two environments behave.

This is why comparing AI factories to traditional data centers tends to feel less like an upgrade conversation and more like an architectural fork in the road.

For one thing, AI systems are sensitive to distance: moving large datasets or model weights across networks adds latency and increases both cost and operational overhead. Over time, teams realize that AI workloads run more efficiently when data, models, and compute are kept close together.

AI factories are built around that reality and deliberately centralize:

- Training and fine-tuning datasets

- Model weights and inference services

- Orchestration and performance monitoring

From a performance standpoint, this makes sense. But from a risk standpoint, it raises the stakes.
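To put the distance point in rough numbers, here is a back-of-envelope sketch of how long it takes just to move a set of model weights over links of different speeds. It assumes idealized line-rate throughput in decimal units (1 GB = 8 gigabits) and ignores latency, protocol overhead, and contention; the 140 GB weight size and the link speeds are hypothetical illustration values, and `transfer_seconds` is a helper written only for this example.

```python
def transfer_seconds(size_gb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Idealized time (seconds) to move `size_gb` gigabytes over a `link_gbps` link.

    Assumes decimal units (1 GB = 8 gigabits) and that the link sustains a
    constant fraction `efficiency` of its line rate. Latency, protocol
    overhead, and contention are ignored, so real transfers take longer.
    """
    if size_gb < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid size, link speed, or efficiency")
    gigabits = size_gb * 8
    return gigabits / (link_gbps * efficiency)


# Hypothetical example: a 140 GB set of model weights on three link speeds.
for gbps in (10, 100, 400):
    print(f"{gbps:>4} Gbps: {transfer_seconds(140, gbps):7.1f} s")
```

Under these assumptions, a single copy takes about 112 seconds at 10 Gbps versus about 2.8 seconds at 400 Gbps, and that cost recurs every time weights or datasets are shuttled between separate storage and compute. Keeping them co-located avoids paying it repeatedly.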

How Security Expectations Change in AI Factories

Traditional data center security is largely perimeter-based: once a workload is authorized and inside the environment, it's generally trusted.

AI factories turn this assumption on its head.

During AI processing, data must be decrypted in memory, models must be fully accessible, and intermediate outputs are generated continuously. That means data is exposed while it's in use, not just when it's stored or transmitted. It's a different kind of threat, one that network controls alone don't fully address.

As a result, AI factories tend to place more emphasis on:

- Protecting data and models during execution

- Verifying workloads before they run

- Reducing implicit trust in operators or infrastructure

These concerns are less pronounced in traditional data centers, where workloads are usually more isolated and less concentrated.
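As a minimal sketch of one narrow piece of "verifying workloads before they run," the example below refuses to load a model artifact unless its SHA-256 digest matches a pre-approved value. The allowlist and the function names are hypothetical; a production AI factory would more likely rely on signed manifests and hardware-backed attestation, which this simple integrity check does not replace.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights need not fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_before_run(path: Path, approved: dict[str, str]) -> bool:
    """Return True only if `path` is on the (hypothetical) allowlist and its digest matches."""
    expected = approved.get(path.name)
    if expected is None:
        return False  # deny by default: unknown artifacts are never trusted
    # Constant-time comparison of the two hex digests.
    return hmac.compare_digest(sha256_file(path), expected)


# Usage: approve an artifact, then show that any modification is rejected.
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.bin"
    weights.write_bytes(b"example weights")
    allowlist = {"model.bin": hashlib.sha256(b"example weights").hexdigest()}
    print(verify_before_run(weights, allowlist))  # True
    weights.write_bytes(b"tampered weights")
    print(verify_before_run(weights, allowlist))  # False
```

The deny-by-default lookup reflects the shift described above away from implicit trust: an artifact is not trusted merely because it is already inside the environment.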

So, when does an AI factory actually make sense?

In truth, not every organization needs an AI factory today. Traditional data centers or managed cloud services are still a sound choice for many teams. AI factories come into play when AI becomes central to operations.

Organizations start looking at AI factories when they’re ready to run large, continuous AI workloads or train and fine-tune their proprietary models. They’re a good fit for businesses that work with sensitive or regulated data, or for those operating under data residency or sovereignty requirements.

Against this backdrop, it makes sense that early adopters include governments, telecom providers, financial institutions, healthcare organizations, and researchers.

Different Infrastructure for Different Problems

To be clear: AI factories and traditional data centers are not competing versions of the same thing.

Traditional data centers are designed to reliably host a wide range of applications and services. AI factories are designed to continuously produce intelligence. As AI moves closer to the core of business and public-sector operations, that distinction matters more than it used to.

Key points to remember:

- Traditional data centers are general-purpose by design

- AI factories are designed and optimized for AI-first workloads

- Centralization improves AI performance but also increases risk

- Security models need to evolve as AI workloads become centralized

- The quality of your infrastructure often influences AI outcomes

As AI systems grow more capable and more autonomous, the infrastructure decisions behind them will play a larger role in what organizations can safely and effectively do with AI.

Learn More About Securing AI Factories

As organizations explore AI factories, many also rethink how sensitive data and models are protected during AI processing (not just before or after). To learn more about approaches for securing AI factories with verifiable trust, request a demo or contact us to continue the conversation.