E-commerce, the buying and selling of goods and services over the internet, moves fast and is highly connected, which makes it both powerful and risky. As supply chains, payments, and marketplaces become more tightly coupled, external disruptions can quickly turn into business problems.

To stay ahead, organizations rely on AI-driven risk analysis to turn external signals and internal operational data into timely, executive-level insights. However, this shift creates a paradox: as insights get more detailed and useful, the data they rely on becomes more sensitive.

Resolving this paradox, i.e., extracting intelligence without exposing proprietary business knowledge, has become a defining challenge of modern e-commerce risk management.

The e-commerce landscape

E-commerce has reshaped global markets and consumer behavior. It encompasses multiple operational models, such as business-to-business (B2B) and business-to-consumer (B2C), each supported by large-scale digital platforms, often powered by advanced B2B commerce software.

These platforms do more than sell products online; they connect payments, logistics, inventory, and customer interactions across many systems. The economic scale of e-commerce is substantial and continues to expand.

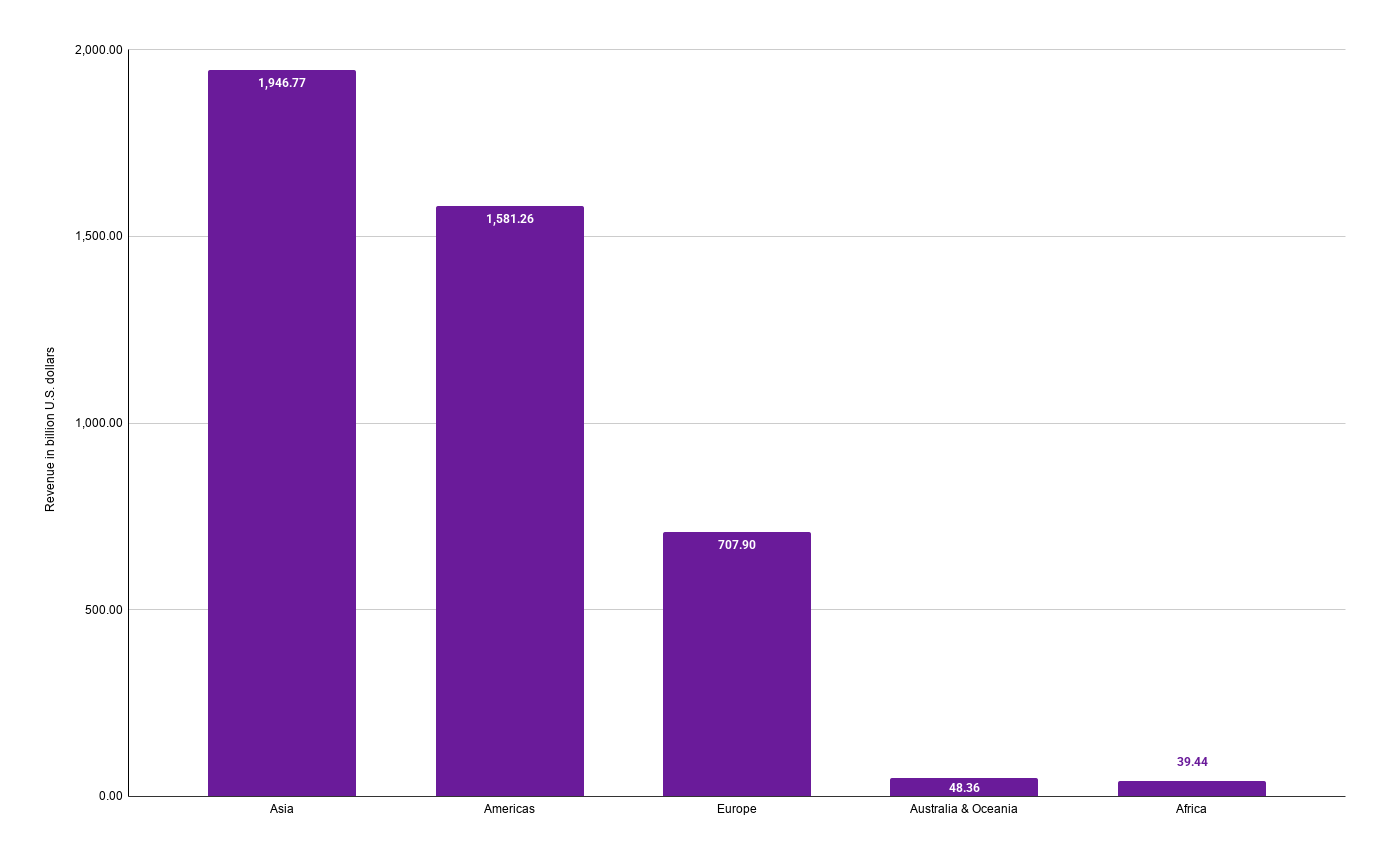

In 2025, global retail e-commerce revenue was led by Asia at approximately USD 1.95 trillion, followed by the Americas at USD 1.58 trillion and Europe at USD 0.71 trillion. [source]

This rapid growth has also increased system complexity and operational exposure. Handling many transactions in real time across global markets brings risks such as fraud, payment problems, supply-chain disruptions, and compliance issues.

As a result, modern e-commerce platforms increasingly rely on advanced analytics, machine learning, and large language models (LLMs) to support risk assessment, anomaly detection, and automated decision workflows.

However, the reliance on data-intensive AI systems raises new concerns around data confidentiality, model integrity, and trust, creating a need for secure ways to run this analysis, such as confidential computing.

Large language models for risk detection in e-commerce

To manage the increasing complexity of global e-commerce, including operations within a dropshipping business, modern risk management systems need to interpret large amounts of unstructured data, from news articles and regulatory updates to internal operational logs.

LLMs provide a new approach to this by leveraging deep learning to understand and generate human-like text. They capture contextual relationships across entire documents, which allows them to identify patterns and infer meaning even in complex, information-dense reports.

To make LLMs actionable for risk analysis, several techniques are commonly applied:

- Few-shot and zero-shot prompting: Given just a few examples (few-shot) or descriptive instructions alone (zero-shot), the LLM can perform specific classification or summarization tasks without requiring extensive retraining. For instance, a prompt might instruct the model to classify a news report as a “financial risk”, “geopolitical risk”, or “supply-chain disruption”.

- Fine-tuning and instruction-tuning: Organizations can adapt pre-trained LLMs to their specific domain. Fine-tuning adjusts the model weights using domain-specific datasets, while instruction-tuning teaches the model to follow structured guidance, producing outputs that align with operational needs.

- Retrieval-augmented generation (RAG): The LLM can access external knowledge sources such as internal databases, supplier reports, or market intelligence reports via a retrieval mechanism. The retrieved documents are added to the model’s input so that its generated narratives are grounded in them and aware of context. This approach lets risk summaries incorporate both historical context and real-time information without storing sensitive data in the model itself.
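The prompting and retrieval techniques above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production pipeline: the example reports, the `retrieve` and `build_prompt` helpers, and the token-overlap scoring are all hypothetical, and the overlap scoring stands in for the embedding search a real RAG system would likely use. The sketch only assembles the prompt text; sending it to a model is left out.

```python
import re

# Labels come from the categories named in the text; the examples are invented.
LABELS = ["financial risk", "geopolitical risk", "supply-chain disruption"]

FEW_SHOT_EXAMPLES = [
    ("Port strike halts container unloading for a week.", "supply-chain disruption"),
    ("Payment processor reports a sharp rise in chargebacks.", "financial risk"),
    ("New export controls announced between two trading blocs.", "geopolitical risk"),
]


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most tokens with the query.

    Token overlap is an assumption made for brevity; it stands in for
    the embedding-based search a real RAG system would use.
    """
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(report: str, context: list[str]) -> str:
    """Assemble a few-shot classification prompt with retrieved context."""
    lines = [f"Classify the report as one of: {', '.join(LABELS)}.", ""]
    for text, label in FEW_SHOT_EXAMPLES:
        lines += [f"Report: {text}", f"Label: {label}", ""]
    if context:
        lines.append("Context:")
        lines += [f"- {doc}" for doc in context]
        lines.append("")
    lines += [f"Report: {report}", "Label:"]
    return "\n".join(lines)


if __name__ == "__main__":
    knowledge_base = [
        "Supplier A ships electronics through the port of Rotterdam.",
        "Supplier B invoices in euros with 30-day payment terms.",
        "Warehouse C handles returns for the apparel category.",
    ]
    report = "Strike at the port of Rotterdam delays shipments."
    print(build_prompt(report, retrieve(report, knowledge_base, k=1)))
```

Dropping `FEW_SHOT_EXAMPLES` from the prompt turns the same template into a zero-shot prompt. Note that the retrieved context travels inside the prompt, which is why both the prompt and the knowledge base count as sensitive inputs.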

By using these capabilities, LLMs can produce two concrete benefits for e-commerce risk management:

- Analytical risk detection: LLMs can classify threats into structured categories, flagging issues such as supply-chain bottlenecks, financial volatility, regulatory non-compliance, or governance failures.

- Narrative synthesis: Beyond classification, LLMs can generate executive-ready reports that summarize complex risk data and provide actionable insights.
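To feed dashboards or reports, free-text model output usually has to be mapped onto a fixed set of categories. The sketch below shows one way this could look, assuming the four categories listed above. The `RiskCategory`, `RiskFinding`, `parse_category`, and `summarize` names are hypothetical, and the count-based digest is only a simple stand-in for the input an LLM-written narrative might start from.

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    SUPPLY_CHAIN = "supply-chain bottleneck"
    FINANCIAL = "financial volatility"
    REGULATORY = "regulatory non-compliance"
    GOVERNANCE = "governance failure"
    UNKNOWN = "unknown"


@dataclass
class RiskFinding:
    source: str  # hypothetical identifier of the analyzed document
    category: RiskCategory


def parse_category(raw_label: str) -> RiskCategory:
    """Map free-text model output to a known category, or UNKNOWN."""
    cleaned = raw_label.strip().lower().rstrip(".")
    for category in RiskCategory:
        if cleaned == category.value:
            return category
    return RiskCategory.UNKNOWN


def summarize(findings: list[RiskFinding]) -> str:
    """Produce a short plain-text digest with counts per category."""
    counts: dict[RiskCategory, int] = {}
    for finding in findings:
        counts[finding.category] = counts.get(finding.category, 0) + 1
    lines = [f"{len(findings)} findings reviewed:"]
    # Most frequent category first; ties keep first-seen order.
    for category, count in sorted(counts.items(), key=lambda kv: -kv[1]):
        lines.append(f"- {category.value}: {count}")
    return "\n".join(lines)
```

Routing unrecognized labels to `UNKNOWN` rather than guessing keeps malformed model output visible for human review.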

However, the very features that make LLMs powerful also make them sensitive: they process confidential prompts, internal metrics, supplier metadata, and proprietary knowledge databases. Any exposure or tampering during inference or RAG retrieval could undermine competitive intelligence, disrupt operations, and leak sensitive data.

This motivates the use of confidential computing, which protects data in use and helps keep LLM-based risk insights secure, private, and trustworthy across the entire AI pipeline.

Securing LLM-based e-commerce risk detection with confidential computing

LLM-based risk detection handles sensitive data at every stage of the process, from internal supply-chain metrics and strategic prompts to external news and knowledge databases accessed through retrieval-augmented generation (RAG).

During inference, the model processes this information to generate analytical classifications (analytical risk detection) and narrative summaries (narrative synthesis). Without additional protections, these stages could expose proprietary information or compromise the integrity of the resulting insights.

Fortanix confidential computing technology addresses this problem by protecting data while it is in use: data stays encrypted in memory and is processed only inside hardware-isolated trusted execution environments, so LLM computations, including prompt processing, embedding creation, and RAG-based retrieval, are shielded from the host system and other workloads. This allows organizations to generate actionable risk insights without exposing sensitive corporate data outside the protected environment.

With Fortanix confidential computing, all layers of the LLM workflow are protected:

- Protecting the prompt: Few-shot or zero-shot prompts guide the LLM’s processing. Confidential computing ensures these instructions, which often contain proprietary business logic, remain confidential during tokenization, embedding, and inference.

- Securing retrieval-augmented knowledge: Knowledge bases accessed during RAG remain encrypted and accessible only within trusted execution environments (TEEs). This keeps supplier data, operational metrics, and strategic intelligence secure while still enabling the model to produce context-rich risk outcomes.

- Ensuring narrative integrity: Confidential computing lets organizations verify that the generated insights were produced by authorized code running on untampered hardware. This provides assurance that executive-ready reports come from the approved pipeline and have not been altered.
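The idea behind these protections can be illustrated with a toy simulation: a key is released only to code whose measurement matches an approved value, and that key is then used to tag a report so later changes are detectable. This is a conceptual sketch only and does not use any Fortanix API. The `KeyBroker` class and its helpers are hypothetical, and a plain SHA-256 hash stands in for the hardware-signed attestation evidence that real TEEs produce.

```python
import hashlib
import hmac
import secrets


def measure(code: bytes) -> str:
    """Return a SHA-256 digest standing in for a TEE code measurement."""
    return hashlib.sha256(code).hexdigest()


class KeyBroker:
    """Toy key service that releases a key only to an approved measurement."""

    def __init__(self, approved_measurement: str) -> None:
        self._approved = approved_measurement
        self._key = secrets.token_bytes(32)

    def release_key(self, reported_measurement: str) -> bytes:
        # Assumption: the caller reports its own measurement. Real
        # attestation verifies hardware-signed evidence instead of
        # trusting a reported string.
        if not hmac.compare_digest(self._approved, reported_measurement):
            raise PermissionError("measurement not approved; key withheld")
        return self._key


def sign_report(key: bytes, report: str) -> str:
    """Tag a report so later changes to its text can be detected."""
    return hmac.new(key, report.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_report(key: bytes, report: str, tag: str) -> bool:
    """Check that the report text still matches its tag."""
    return hmac.compare_digest(sign_report(key, report), tag)
```

In this sketch, code that differs from the approved version by even one byte yields a different measurement and never receives the key, so it can neither decrypt protected inputs nor produce a valid tag for a report.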

By integrating confidential computing directly into LLM workflows, e-commerce platforms can turn AI analytics into secure, trustworthy e-commerce risk detection systems, enabling decision-makers to act confidently on AI-generated insights without exposing sensitive business information.

Conclusion: Building trustworthy AI for e-commerce risk detection

As e-commerce continues to grow in scale and complexity, the stakes for supply chain, financial, and regulatory risk management keep increasing. LLMs are a powerful tool for identifying emerging threats and producing executive-ready risk reports.

Unfortunately, these capabilities depend on extensive use of sensitive data. Without strong security protections, insights can be exposed and proprietary data leaked, leaving organizations to face operational problems, compliance issues, lawsuits, and reputational damage.

Fortanix confidential computing addresses this challenge by protecting data while it is in use, ensuring that prompts, confidential internal metrics, knowledge bases, and model outputs remain secure, private, and trustworthy.

By combining the analytical intelligence of LLMs with hardware-enforced security, organizations can utilize LLM-based e-commerce risk detection processes to streamline decision-making.